AutoGen

Unverified · verified 22 May 2026

A framework for building AI agents and applications

TL;DR

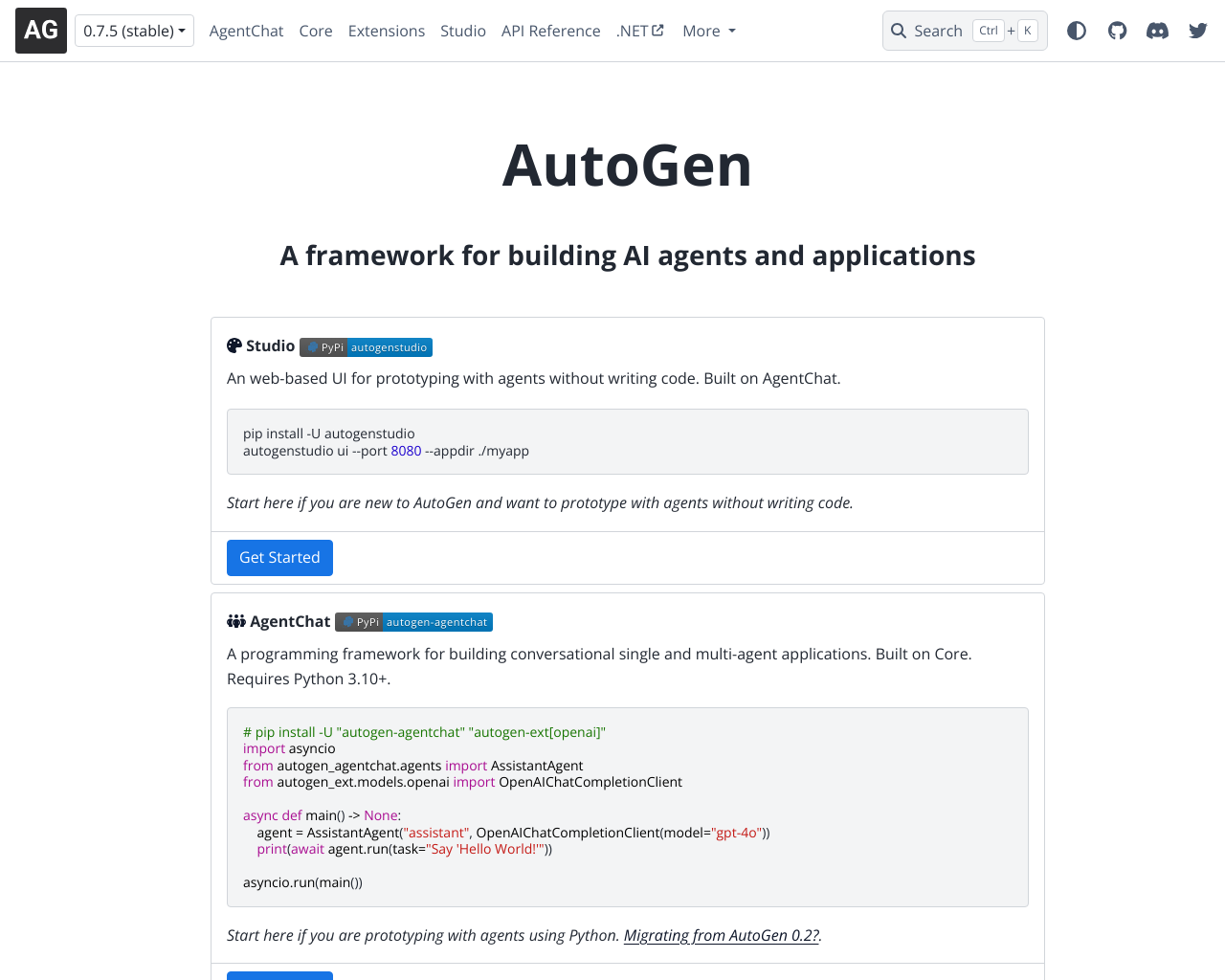

AutoGen is a high-performance framework developed by Microsoft Research for building multi-agent AI systems that collaborate through conversational patterns. It empowers developers to orchestrate specialized agents that can autonomously execute code, use tools, and interact with humans to solve complex tasks.

What Users Actually Pay

No user-reported pricing yet.

Our Take

AutoGen has solidified its position as the 'power user' framework in the agentic AI market, particularly with the release of version 0.4. While competitors like CrewAI focus on role-based hierarchies and LangGraph on deterministic state machines, AutoGen excels in dynamic, multi-turn conversational collaboration. Its recent architectural shift to an Actor Model provides the scalability required for enterprise-grade, distributed agent networks, moving it beyond simple prototyping into production-ready territory.

However, the framework suffers from a significant 'identity crisis' caused by its rapid evolution. The transition from the 0.2 API to the 0.4 rewrite has left a trail of fragmented documentation and breaking changes that can frustrate new developers. Furthermore, a high-profile package name dispute on PyPI (where the 'pyautogen' name is now controlled by a fork rather than Microsoft) adds a unique layer of confusion for installation and dependency management.

For teams already deep in the Microsoft ecosystem or those requiring robust, sandboxed code execution (via its native Docker integration), AutoGen is the clear frontrunner. It is best suited for R&D departments and software engineers building complex, non-linear workflows where agents must debate, iterate, and refine their own outputs without constant manual oversight.
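To make "multi-turn conversational collaboration" concrete, here is a minimal sketch in plain Python of a round-robin conversation in which a writer and a critic take turns until the critic approves. It does not use AutoGen's API; `Agent`, `Message`, `run_round_robin`, and the `APPROVE` stop word are hypothetical names chosen for illustration, and the two agents are simple stand-in functions rather than LLM calls.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Message:
    sender: str
    content: str


@dataclass
class Agent:
    # Hypothetical agent: a name plus a function mapping the history to a reply.
    name: str
    respond: Callable[[List[Message]], str]


def run_round_robin(agents, task, max_turns=6, stop_word="APPROVE"):
    """Let agents speak in fixed order until one says the stop word."""
    history = [Message("user", task)]
    for turn in range(max_turns):
        agent = agents[turn % len(agents)]
        reply = agent.respond(history)
        history.append(Message(agent.name, reply))
        if stop_word in reply:
            break
    return history


def writer(history):
    # Stand-in for an LLM call: produce the next numbered draft.
    drafts = sum(1 for m in history if m.sender == "writer")
    return f"draft v{drafts + 1}"


def critic(history):
    # Stand-in for an LLM call: accept the second draft, reject anything else.
    last = history[-1].content
    return "APPROVE" if last == "draft v2" else f"revise {last}"


history = run_round_robin(
    [Agent("writer", writer), Agent("critic", critic)],
    "Write a haiku",
)
for message in history:
    print(f"{message.sender}: {message.content}")
```

The `max_turns` cap matters as much as the stop word: without it, two agents that never agree would loop indefinitely.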

Pros

- Native support for sandboxed code execution using Docker, allowing agents to safely write and run their own scripts.

- Powerful multi-agent conversation patterns including GroupChat, Society of Mind, and hierarchical delegation.

- High scalability through a new event-driven, asynchronous architecture (Actor Model) that supports distributed agents across different processes.

- Direct integration with Microsoft's broader ecosystem, including Azure OpenAI, .NET support, and Model Context Protocol (MCP) servers.

- Active research-backed development ensuring it stays at the forefront of agentic paradigms like Magentic-One.
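The event-driven Actor Model mentioned in the list above can be illustrated with a small `asyncio` sketch. This is not AutoGen's runtime: `Actor`, `Forwarder`, and the `None` shutdown sentinel are hypothetical names, and everything runs in one process. The point is only the pattern, in which each actor owns private state and a mailbox and handles one message at a time.

```python
import asyncio


class Actor:
    """Hypothetical actor: private state plus a mailbox, processed sequentially."""

    def __init__(self, name):
        self.name = name
        self.mailbox = asyncio.Queue()
        self.handled = []

    async def run(self):
        while True:
            message = await self.mailbox.get()
            if message is None:  # sentinel used by this sketch to stop the actor
                break
            await self.on_message(message)

    async def on_message(self, message):
        self.handled.append(message)


class Forwarder(Actor):
    """Records each message, then passes an annotated copy to another actor."""

    def __init__(self, name, target):
        super().__init__(name)
        self.target = target

    async def on_message(self, message):
        await super().on_message(message)
        await self.target.mailbox.put(f"{self.name} saw: {message}")


async def main():
    sink = Actor("sink")
    front = Forwarder("front", sink)
    sink_task = asyncio.create_task(sink.run())
    front_task = asyncio.create_task(front.run())

    await front.mailbox.put("hello")
    await front.mailbox.put("world")
    await front.mailbox.put(None)
    await front_task  # front has forwarded everything it received

    await sink.mailbox.put(None)
    await sink_task
    return sink.handled


result = asyncio.run(main())
print(result)
```

Because actors share nothing except messages, the same design can in principle be spread across processes by replacing the in-memory queue with a network transport.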

Cons

- Significant fragmentation between version 0.2 and 0.4, causing confusion for developers following older tutorials.

- Unusual package management situation on PyPI; users must now use 'autogen-agentchat' as the 'pyautogen' package was taken over by a fork (AG2).

- Steep learning curve compared to 'low-code' alternatives, requiring strong Python proficiency and an understanding of asynchronous programming.

- Documentation can be dense and occasionally lags behind the rapid-fire release cycle of the core framework.
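Given the package naming situation described above, it can help to check which distribution is actually installed in an environment. The sketch below uses only the standard library's `importlib.metadata`; the distribution names come from this page, while the `KNOWN` table, its labels, and the function names are this example's own assumptions.

```python
from importlib import metadata

# Distribution names as mentioned on this page; the labels are illustrative.
KNOWN = {
    "autogen-agentchat": "Microsoft AutoGen (0.4 line)",
    "pyautogen": "name now controlled by a fork (AG2)",
}


def classify(installed_names):
    """Return the known AutoGen-related distributions found in installed_names."""
    normalized = {name.lower().replace("_", "-") for name in installed_names}
    return {name: label for name, label in KNOWN.items() if name in normalized}


def installed_distribution_names():
    """List the names of all distributions visible to this interpreter."""
    names = []
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:
            names.append(name)
    return names


report = classify(installed_distribution_names())
for name, label in report.items():
    print(f"{name}: {label}")
if not report:
    print("No AutoGen-related distribution found in this environment.")
```

If both names show up in the same environment, that is usually a sign that an older tutorial's install command was mixed with a newer one.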

Sentiment Analysis

Sentiment has remained stable since the last capture, after improving from 0.55 to 0.62 following the stable release of version 0.4. While developer excitement for the technical architecture and Microsoft backing is high, the overall score is tempered by persistent frustration regarding the PyPI package naming conflict and the effort required to migrate legacy code.

Sentiment Over Time

By Source

85 mentions

Sample quotes (2)

- "Once you get the hang of the nuances, it's really robust and configurable. Doesn't have the same library of plugins as LangChain, but they actually work all the time."

- "The package confusion is unfortunate... the pyautogen and autogen packages in PyPI have been 'hijacked' to point to a fork."

120 mentions

Sample quotes (2)

- "AutoGen 0.4 is a game-changer for distributed AI agents. The actor model architecture finally brings the scale we need."

- "Building a complex multi-agent system? AutoGen is still the most flexible way to get agents talking to each other."

350 mentions

Sample quotes (2)

- "The most forward-looking agent framework out there in terms of architecture."

- "Great work on the 0.4 rewrite! The event-driven model makes observability much easier."

Agent Readiness

52/100

AutoGen is highly 'agent-ready' for developers building custom infrastructure, specifically due to its built-in Docker execution environment and gRPC-based distributed runtime. However, it lacks out-of-the-box connectors for consumer automation platforms like Zapier or Make, as it is positioned as a foundational SDK rather than a SaaS product. Its readiness is highest for enterprise developers who can leverage the new AutoGen Studio for low-code prototyping before deploying to a custom backend.
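As a rough illustration of what Docker-based sandboxing involves, the sketch below builds a `docker run` command that executes an agent-written script with no network access, a memory cap, and a read-only mount. It is not AutoGen's executor: `docker_run_argv`, the `python:3.12-slim` image, the `/workspace` mount point, and the example script path are all assumptions made for this example.

```python
import shlex
from pathlib import Path


def docker_run_argv(script_path, image="python:3.12-slim", memory="256m"):
    """Build an argv list that runs one script in a throwaway container."""
    script = Path(script_path).resolve()
    return [
        "docker", "run",
        "--rm",                                  # delete the container afterwards
        "--network", "none",                     # no network access from the sandbox
        "--memory", memory,                      # cap memory use
        "-v", f"{script.parent}:/workspace:ro",  # mount the script directory read-only
        "-w", "/workspace",
        image,
        "python", script.name,
    ]


# Hypothetical path to a script an agent has just written.
argv = docker_run_argv("/tmp/agent_job/solve.py")
print(shlex.join(argv))
```

To actually run it, the list can be passed to `subprocess.run(argv, capture_output=True, text=True, timeout=60)`, with the timeout guarding against scripts that never finish.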

Last checked Apr 28, 2026

MCP Integrations

1 server

Create and manage AI agents that collaborate and solve problems through natural language interacti…

Last checked May 18, 2026

[ features ]

Prompt Management

Editing and tracking of LLM prompts

Allows versioning prompts and tracking and comparing different variants over time.

Compliance & Security

Security certifications, compliance features, and access control capabilities.

SOC 2 Type I or Type II certification.

ISO 27001 information security certification.

Built-in tools for GDPR compliance (data export, deletion, consent).

Complete audit log of all data changes.

Granular permissions based on user roles.

Single Sign-On integration support.

AI Engine Coverage

Coverage and support for various AI models, LLMs, and search engines.

List of AI models and LLMs supported for tracking (e.g., ChatGPT, Gemini).

How often metrics are updated (e.g., real-time, daily).

Support for tracking in multiple countries or regions.

Orchestration Capabilities

Core features for coordinating and executing AI agent workflows.

Supports orchestration of multiple collaborating agents.

Maintains agent state and memory across interactions.

Automatically routes requests across multiple LLM providers.

Supports agents calling external tools or functions.

Deployment & Scalability

Deployment models and scalability features for production use.

Primary way to deploy and run the orchestration.

Supports multiple teams or users from single deployment.

Automatic scaling for high-load agent workflows.

Compatible with serverless/serverless-like deployments.

Observability & Monitoring

Tools for tracking performance, costs, and debugging agent runs.

Monitors and budgets LLM usage costs per run.

Detailed traces of agent steps and decisions.

Visual graphs or dashboards of agent flows.

Metrics like latency, throughput for agent executions.

Developer Experience

Tools and abstractions easing agent development and iteration.

No-code/low-code UI for designing agent workflows.

OpenAI API-compatible endpoints or SDKs.

Available as open-source with community contributions.

Programming languages with official SDK support.
