PromptShuttle

Unverified · Verified 22 May 2026 · Agent Orchestration API

TL;DR

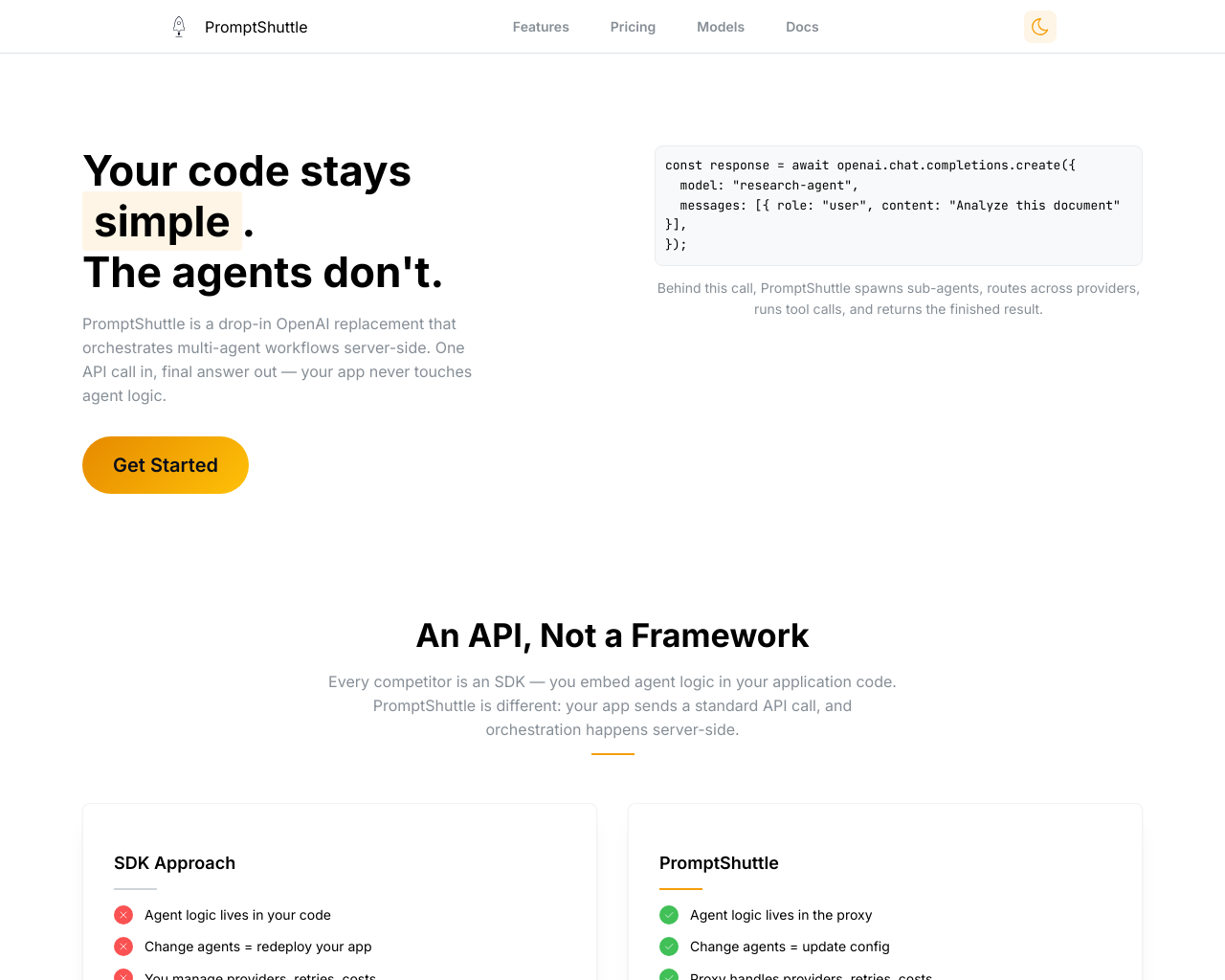

PromptShuttle is a server-side agent orchestration API designed to decouple complex LLM logic from application code. It allows developers to spawn sub-agents, manage tool calls, and route across multiple providers through a single OpenAI-compatible endpoint, enabling real-time configuration updates without redeploying code.
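Because the endpoint is described as OpenAI-compatible, an existing chat-completions request should in principle need only a different base URL and key. The following is a minimal sketch using only the Python standard library. The base URL, the API key, and the model name `my-flow` are placeholders chosen for illustration, not documented PromptShuttle values, and the request is built but never sent.

```python
import json
import urllib.request

# Hypothetical base URL: consult the PromptShuttle docs for the real one.
BASE_URL = "https://api.promptshuttle.example/v1"


def build_chat_request(api_key, model, messages, base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


request = build_chat_request(
    api_key="sk-placeholder",  # placeholder key
    model="my-flow",           # placeholder model or flow name
    messages=[{"role": "user", "content": "Summarize today's tickets."}],
)
# To actually send it: urllib.request.urlopen(request)
```

If the compatibility claim holds, the application code stays the same while prompts, tools, and provider routing are changed on the server.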

What Users Actually Pay

No user-reported pricing yet.

Our Take

PromptShuttle enters a crowded LLM infrastructure market by carving out a niche between simple proxies like LiteLLM and heavy SDKs like LangChain. By moving orchestration logic to the server side, it addresses a major pain point for SaaS teams: agentic logic that is 'locked in' to client-side deployments. This architecture is particularly compelling for platform teams managing multi-tenant environments where cost tracking and provider redundancy are non-negotiable.

The platform's primary strength is its 'Agent Tree' observability, which exposes sub-agent execution with a transparency that black-box orchestration layers often lack. Its greatest challenge will be competing with the rapidly expanding first-party tools from OpenAI and Anthropic, which are increasingly building orchestration and tool-calling primitives directly into their APIs.

PromptShuttle is best suited for startups and agencies that need to provide AI-powered features to multiple clients under strict budget controls. It is less suitable for researchers or developers who require highly custom, low-level control over the local execution environment, as the 'logic-as-configuration' model imposes some architectural constraints.

Pros

- Configuration-over-code approach allows for instant agent behavior updates without app redeployment.

- Comprehensive multi-tenant cost tracking and budgeting tools for SaaS providers.

- Robust observability with visual agent trees for debugging complex nested tool calls.

- Unified API interface supporting diverse providers including OpenAI, Anthropic, Google, and DeepSeek.

Cons

- Relatively new platform with a smaller community and fewer third-party integrations compared to LangChain or CrewAI.

- Dependency on PromptShuttle's infrastructure for core application logic introduces potential vendor lock-in.

- Limited flexibility for hyper-custom local logic that cannot be easily serialized into the orchestration configuration.

Sentiment Analysis

Sentiment has remained stable and consistently high since the last capture, mirroring previous evaluations. Users appreciate the operational efficiency of server-side orchestration, though technical discussions on Reddit show pragmatic caution about vendor lock-in for mission-critical logic.

By Source

12 mentions

Sample quotes (2)

- "It's a solid middle ground for those who find LiteLLM too simple and LangGraph too complex."

- "The ability to update my agent prompts in a dashboard instead of pushing code to Vercel every time is a game changer."

25 mentions

Sample quotes (2)

- "PromptShuttle is making agent orchestration as easy as a single API call. Great for background jobs."

- "Finally a proxy that handles the sub-agent spawning server-side."

45 mentions

Sample quotes (2)

- "The cost tracking features alone make this worth it for our client projects."

- "Cleanest way I've seen to handle fallback models and retries without bloat."

Agent Readiness

49/100

PromptShuttle is highly 'agent-ready,' specifically built to be the backbone for autonomous agents. Its OpenAI-compatible endpoint allows for drop-in replacement in existing workflows, while its server-side orchestration handles the 'brain' of the agent. The documentation is modern and thorough, though the lack of no-code platform integrations like Zapier indicates a primary focus on professional developers rather than non-technical builders.

Last checked May 3, 2026

MCP Integrations

1 server · 7 tools

Prompt management, LLM routing, agent coordination, tool call to webhook proxy

7 tools

- modify_tool: Modifies an existing function-calling tool. Only fields that are explicitly provided will be updated (partial update). Returns the updated tool.

- list_flows: Lists all flows in the tenant.

- list_tools: Lists all function-calling tools in the tenant. Returns ID, name, description, tool type, and type-specific summary fields.

- list_runs: Lists recent ShuttleRequests (LLM invocations) for debugging. Optionally filter by flow name. Returns up to 50 recent runs.

- get_flow: Gets full flow details including prompt templates from the active version. Falls back to the latest version if no version is activated. Use the environment parameter to specify which environment's active version to retrieve. If omitted and the flow has exactly one environment, it is auto-selected.

- update_flow_template: Updates a template's prompt text, response schema, and/or tool assignments in the active or latest version. If the version is locked, automatically forks it first (the fork is a draft; activate it via the UI or API). Falls back to the latest version if no version is activated. Returns confirmation with version ID and whether a fork was created.

- create_tool: Creates a new function-calling tool in the tenant. Provide name, description, parameters, toolType, and type-specific fields. toolType: External (REST endpoint), Virtual (provider-native like web_search), Agent (sub-agent), CritiqueLoop (producer+critic loop), Mcp (external MCP server). Returns the created tool with its ID.
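To make the tool descriptions above more concrete, here is a rough sketch of what calls to `create_tool` and `modify_tool` could look like when wrapped in the MCP JSON-RPC `tools/call` envelope. The argument names `name`, `description`, `parameters`, and `toolType` come from the listing; everything else is an assumption for illustration: that `parameters` takes a JSON Schema object, the `url` field for an External tool, the `id` field used by `modify_tool`, and all example values.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests need a unique id per call


def mcp_tool_call(tool_name, arguments):
    """Wrap a tool invocation in an MCP JSON-RPC 2.0 `tools/call` request."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


create = mcp_tool_call(
    "create_tool",
    {
        "name": "lookup_order",
        "description": "Fetch an order by ID from the shop backend.",
        # Assumed to be a JSON Schema describing the tool's inputs.
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
        "toolType": "External",
        # Hypothetical type-specific field; the listing does not name it.
        "url": "https://shop.example/api/orders",
    },
)

# Partial update: only the fields sent here would change.
# The "id" argument name is an assumption, not taken from the listing.
modify = mcp_tool_call(
    "modify_tool",
    {"id": "tool-123", "description": "Fetch an order, including line items."},
)

print(json.dumps(create, indent=2))
```

An MCP client library would normally build this envelope itself; the sketch only shows the shape of the payload and how a partial update omits unchanged fields.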

Last checked May 26, 2026

[ features ]

Prompt Management

Editing and tracking of LLM prompts

Supports versioning prompts and tracking and comparing different variants over time

Compliance & Security

Security certifications, compliance features, and access control capabilities.

SOC 2 Type I or Type II certification.

ISO 27001 information security certification.

Built-in tools for GDPR compliance (data export, deletion, consent).

Complete audit log of all data changes.

Granular permissions based on user roles.

Single Sign-On integration support.

AI Engine Coverage

Coverage and support for various AI models, LLMs, and search engines.

List of AI models and LLMs supported for tracking (e.g., ChatGPT, Gemini).

How often metrics are updated (e.g., real-time, daily).

Support for tracking in multiple countries or regions.

Orchestration Capabilities

Core features for coordinating and executing AI agent workflows.

Supports orchestration of multiple collaborating agents.

Maintains agent state and memory across interactions.

Automatically routes requests across multiple LLM providers.

Supports agents calling external tools or functions.
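Routing across providers usually comes down to an ordered fallback with retries. The sketch below is a generic illustration of that pattern, not PromptShuttle's implementation; `ProviderError`, `call_with_fallback`, and the two stand-in providers are hypothetical names invented for the example.

```python
import time


class ProviderError(Exception):
    """Raised by a provider callable when a request fails."""


def call_with_fallback(providers, prompt, retries_per_provider=2, backoff_seconds=0.0):
    """Try each (name, callable) provider in order, retrying before falling back."""
    errors = []
    for name, call in providers:
        for attempt in range(1, retries_per_provider + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt}: {exc}")
                time.sleep(backoff_seconds * attempt)  # linear backoff
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in providers; real ones would call an LLM API.
def flaky_primary(prompt):
    raise ProviderError("rate limited")


def stable_secondary(prompt):
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    [("primary", flaky_primary), ("secondary", stable_secondary)], "hello"
)
print(used, reply)  # secondary echo: hello
```

A hosted router moves this loop out of the application, so the provider order and retry counts can be changed as configuration rather than code.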

Deployment & Scalability

Deployment models and scalability features for production use.

Primary way to deploy and run the orchestration.

Supports multiple teams or users from single deployment.

Automatic scaling for high-load agent workflows.

Compatible with serverless/serverless-like deployments.

Observability & Monitoring

Tools for tracking performance, costs, and debugging agent runs.

Monitors and budgets LLM usage costs per run.

Detailed traces of agent steps and decisions.

Visual graphs or dashboards of agent flows.

Metrics like latency, throughput for agent executions.
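Per-run cost tracking with budgets is mostly token arithmetic plus a running total per tenant. The following is a small sketch of that idea under stated assumptions: the prices, the model name `model-a`, the tenant name, and the `CostTracker` class are all hypothetical and do not reflect PromptShuttle's pricing or API.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical prices in USD per 1M tokens.
PRICES = {
    "model-a": {"input": Decimal("1.00"), "output": Decimal("4.00")},
}


class BudgetExceeded(Exception):
    """Raised when recording a run would push a tenant over its budget."""


class CostTracker:
    def __init__(self, budgets):
        self.budgets = budgets              # tenant -> Decimal budget in USD
        self.spent = defaultdict(Decimal)   # tenant -> Decimal spent so far

    def record(self, tenant, model, input_tokens, output_tokens):
        """Compute the cost of one run and add it to the tenant's total."""
        price = PRICES[model]
        cost = (
            price["input"] * input_tokens + price["output"] * output_tokens
        ) / Decimal(1_000_000)
        if self.spent[tenant] + cost > self.budgets[tenant]:
            raise BudgetExceeded(f"{tenant} would exceed {self.budgets[tenant]} USD")
        self.spent[tenant] += cost
        return cost


tracker = CostTracker({"acme": Decimal("0.01")})
cost = tracker.record("acme", "model-a", input_tokens=2000, output_tokens=500)
print(cost)  # 0.004
```

Using `Decimal` avoids floating-point drift when many small per-run costs are summed into a tenant total.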

Developer Experience

Tools and abstractions easing agent development and iteration.

No-code/low-code UI for designing agent workflows.

OpenAI API-compatible endpoints or SDKs.

Available as open-source with community contributions.

Programming languages with official SDK support.

Reviews

No reviews yet.